the Creative Commons Attribution 4.0 License.

Developing Guidelines for working with Multi-Model Ensembles in CMIP

Anja Katzenberger

Jhayron S. Pérez-Carrasquilla

Keighan Gemmell

Evgenia Galytska

Christine Leclerc

Indrani Roy

Arianna Varuolo-Clarke

Milica Tošić

Nina Črnivec

Earth System Models (ESMs) are a key tool for studying the climate under changing conditions. Over recent decades, it has become established practice not to rely on projections of a single model but to combine various ESMs in multi-model ensembles (MMEs) to improve robustness and quantify the uncertainty of the projections. Data access for MME studies has been fundamentally facilitated by the World Climate Research Programme's Coupled Model Intercomparison Project (CMIP) – a collaborative effort bringing together ESMs from modelling communities all over the world. Despite the CMIP standardization processes, addressing specific research questions using MMEs requires unique ensemble design, analysis, and interpretation choices. Based on the collective expertise within the Fresh Eyes on CMIP initiative, mainly composed of early-career researchers engaged in CMIP, we have identified common issues and questions encountered while working with climate MMEs. Here, we provide a comprehensive literature review addressing these questions. We provide statistics tracing the development of the field of climate MME analysis over recent decades, and, synthesizing existing studies, we outline guidelines regarding model evaluation, model dependence, weighting methods, and uncertainty treatment. We summarize a collection of useful resources for MME studies, review common questions and strategies, and finally outline emerging scientific trends, such as the integration of machine learning (ML) techniques, single model initial-condition large ensembles (SMILEs), and computational resource considerations. We thereby aim to support researchers working with climate MMEs, particularly in the upcoming 7th phase of CMIP.


Earth system models (ESMs) are a key tool for assessing the future climate under changing conditions. Starting from the seminal work of Manabe and Hasselmann (e.g. Manabe and Strickler, 1964; Manabe and Bryan, 1969; Manabe and Wetherald, 1967; Hasselmann, 1976), who were awarded the 2021 Nobel Prize in Physics, climate models have continuously evolved over decades. During this process, models have become progressively more complex, encapsulating processes related to aerosols, atmospheric chemistry, the carbon cycle, and ocean biogeochemistry. This evolution occurred in parallel with advances in Earth system observations, high-resolution numerical models giving insight into smaller-scale phenomena (e.g., detailed radiative transfer models, cloud-resolving models, large-eddy simulations), and growing computational power (e.g. Gettelman et al., 2022; Schneider et al., 2017) allowing model resolution to steadily improve.

Since the beginning of large-scale atmospheric modelling, intercomparisons among models have been carried out. Initially, these intercomparisons were mostly performed for numerical weather prediction, as computational resources limited intercomparison studies in the climate context and a clear experimental strategy was lacking (Gates, 1992). Since the 1970s, the Working Group on Numerical Experimentation (WGNE), supporting the World Climate Research Programme, has organized several intercomparison projects among climate models. The first international systematic intercomparison framework for climate models was established in 1990 in the context of the Atmospheric Model Intercomparison Project (AMIP; Gates, 1992). In the early 1990s, the Intergovernmental Panel on Climate Change (IPCC) provided an intercomparison of atmospheric models in its first assessment report (AR; Gates, 1992). In the following years, Räisänen (1997) advocated the need for quantitative model comparison and suggested that the agreement between models can indirectly serve as a measure of the reliability of the simulations. Accordingly, Räisänen and Palmer (2001) introduced a probabilistic perspective on MME projections. The authors quantified the probability of specific climate events happening based on 17 coupled atmosphere-ocean general circulation models (AOGCMs). Contemporaneously, AMIP was followed by the Coupled Model Intercomparison Project (CMIP) coordinated by the World Climate Research Programme (WCRP), which also incorporated results from AOGCMs (Meehl et al., 2000).

While the first phase of CMIP was limited to control runs, new standardized scenarios were incorporated throughout the phases of CMIP with an increasing number of international model centres contributing simulations. Concurrently, the volume of data has been steadily increasing (Williams et al., 2016) and is stored within a standardized format at the Earth System Grid Federation (ESGF) central repository (Cinquini et al., 2012). In more recent CMIP generations, a variety of supporting experiments are conducted (e.g. Eyring et al., 2016), including paleoclimate runs (simulations of the “distant past”), historical runs (simulations of the “recent past”), control runs to study natural variability, as well as various other experiments. Finally, future climate change simulations are performed for various greenhouse gas emission scenarios such as abrupt carbon dioxide doubling or quadrupling to derive climate sensitivity (a measure of how much the Earth's climate system will warm under a doubling of atmospheric CO2 concentration), as well as for multiple plausible future emission scenarios (O'Neill et al., 2017; Riahi et al., 2017; van Vuuren et al., 2025). The latter denote diverse scenarios of evolution of the global society (including population, economy, and technology) which thus lead to differing emissions of greenhouse gases (CO2, CH4, N2O) and other air pollutants until the end of the 21st century and are associated with different climate change mitigation and adaptation policies and challenges (IPCC, AR6). These CMIP projections have proven essential for informing mitigation and adaptation strategies to climate change at the global and regional scales (Meehl et al., 2000). For regional analysis, the CMIP output is often downscaled to finer resolution, e.g. by using the CMIP output as boundary conditions for regional climate models (RCMs). This is done, for example, in the WCRP COordinated Regional climate Downscaling EXperiment (CORDEX), which provides a coordinated framework for producing and evaluating regional climate projections across multiple domains worldwide (Giorgi, 2019; Gutowski et al., 2016).

The main components of an ESM describe the atmosphere, ocean, cryosphere, land, and increasingly, the carbon cycle and other biogeochemical processes. Each component involves a variety of interacting phenomena occurring at a wide range of spatial and temporal scales (e.g. Gettelman et al., 2022). In all ESMs, the continuous behavior of the atmosphere and ocean is first discretized in space and time via the so-called “model dynamical core” which encompasses known governing equations that capture resolved (grid-scale) phenomena and parameterization schemes that represent unresolved or poorly understood (subgrid-scale) processes. ESMs differ in the choice of computational grids (e.g., latitude-longitude structured grids, icosahedral grids, variable resolution cube-sphere grids), numerical methods for solving the dynamical core equations, and in parameterization schemes. While some parameterizations are based on well-established physical theory, others, particularly those related to clouds or turbulence, remain subject to substantial uncertainty. In addition, computational limitations restrict the accuracy with which models can represent certain relevant processes. Therefore, the decisions made at modelling centers make each ESM an imperfect attempt to represent a multitude of highly complex, nonlinear processes, and the synchronized interplay among them. Depending on the interest of the end user, some of these necessary idealization decisions may be more suitable than others.

Combining several ESMs into multi-model ensembles (MMEs) can have numerous advantages compared to individual simulations, e.g. accounting for the uncertainty arising from the differing modelling decisions (model uncertainty). Starting in the weather forecasting community, numerous studies have shown the benefits of ensemble predictions compared to predictions based on single models (Doblas-Reyes et al., 2003; Krishnamurti et al., 1999). For example, the North American MME showed improvements in various skill metrics (correlation, RMSE, RPSS, and reliability) compared to the individual models used before (Kirtman et al., 2014). Inspired by these findings, studies in the climate context also analyzed the potential benefits of working with MMEs for projections. In climate model evaluation, MME projections have been shown to outperform individual model projections in numerous studies, e.g. regarding the mean (Gleckler et al., 2008; Knutti et al., 2010a; Lambert and Boer, 2001; Palmer et al., 2005; Phillips and Gleckler, 2006; Pincus et al., 2008; Reichler and Kim, 2008) and variability (Zhang et al., 2007). The enhancement of the signal and cancellation of errors contribute to these advantages (Doblas-Reyes et al., 2005; Hagedorn et al., 2005; Smith et al., 2013). Becker et al. (2022) highlight the practical advantage of the continuous operation of MMEs, which can be maintained even when individual modelling centers are temporarily unable to contribute, for example due to technical or political constraints. They further provide an example where the use of an MME enabled the identification of outlier behavior in ENSO predictions, which could subsequently be traced back to previously unknown deficiencies in the underlying reanalysis dataset, thereby supporting model improvement. Furthermore, an ensemble approach reduces the risk of selecting a model outlier with particularly large biases.
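The error-cancellation argument can be illustrated with a small synthetic sketch. It assumes hypothetical models whose errors relative to a reference field consist of a shared component and an independent component; all amplitudes and array sizes are invented for illustration and are not taken from any of the cited studies. Under these assumptions, the RMSE of the multi-model mean cannot exceed the average RMSE of the individual models, and only the independent part of the error is reduced by averaging.

```python
import numpy as np

def rmse(field, reference):
    """Root mean square error between a field and a reference."""
    return float(np.sqrt(np.mean((field - reference) ** 2)))

def mme_error_summary(models, reference):
    """Compare individual-model errors with the error of the ensemble mean.

    models: array of shape (n_models, n_points)
    reference: array of shape (n_points,)
    """
    individual = np.array([rmse(m, reference) for m in models])
    mme_mean = models.mean(axis=0)
    return {
        "mean_individual_rmse": float(individual.mean()),
        "best_individual_rmse": float(individual.min()),
        "mme_mean_rmse": rmse(mme_mean, reference),
    }

# Synthetic setup (illustrative assumption, not real model output).
rng = np.random.default_rng(0)
n_models, n_points = 10, 500
reference = np.sin(np.linspace(0.0, 2.0 * np.pi, n_points))

# Shared error: identical in every model, so averaging cannot remove it.
shared_error = 0.3 * rng.standard_normal(n_points)
# Independent error: differs between models and partly cancels in the mean.
independent_error = 0.5 * rng.standard_normal((n_models, n_points))

models = reference + shared_error + independent_error
summary = mme_error_summary(models, reference)
print(summary)
```

Increasing the amplitude of `shared_error` relative to `independent_error` in this sketch reduces the advantage of the ensemble mean, which is one way to picture why common structural biases limit the benefit of averaging.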

Given these benefits, MME projections have become an established tool for climate studies addressing a broad range of research questions. They are also the standard method to analyze and present results in the Assessment Reports (ARs) of the Intergovernmental Panel on Climate Change (IPCC), where the state-of-the-art knowledge on climate change is reviewed. For researchers, MMEs provide an efficient way to get an overview of general tendencies for specific questions. For non-experts, presenting results in a synthesized format, as is done with MMEs, also facilitates accessibility and interpretation (Knutti et al., 2010a), underlining the benefits of MMEs for users.

It is important to recognize that CMIP constitutes an “ensemble of opportunity” (Tebaldi and Knutti, 2007; Sanderson and Knutti, 2012; Merrifield et al., 2023), as it reflects the collection of readily available simulations rather than a systematically designed sample. Contributing institutions range from long-established, well-resourced climate modelling centers to newer groups with sufficient computational resources to run adapted versions of existing models. While this inclusivity broadens participation, such ensembles of opportunity are not designed to constitute a statistically representative sample of multi-model uncertainty (Merrifield et al., 2023). In this context, the superiority of MMEs is not universal. There are cases in which individual models can outperform the ensemble mean, for instance when the averaging inherent to MMEs suppresses relevant signals that are well represented in only a subset of models. This can occur for specific physical processes, or extremes, where ensemble averaging may smooth physically meaningful variability or dampen circulation-driven responses. Moreover, if most models in an MME share common structural components, parameterizations, or tuning strategies, systematic biases can persist in the ensemble mean. In such cases, individual models with alternative formulations may provide more accurate representations for specific variables, regions, or applications.

The availability of standardized climate model outputs facilitated model intercomparison and has naturally inspired the use of MMEs since the beginning of the 2000s (Tebaldi and Knutti, 2007). Consequently, the AR3 of the IPCC (2001) presented many results based on MME means, accompanied by measures of inter-model variability (Tebaldi and Knutti, 2007). In the AR4 of the IPCC (2007), model projections were only included if the models were successors from previous generations, so a de facto model selection took place (Knutti et al., 2010b). To support IPCC lead authors for the AR5 and later, a “Good Practice Guidance Paper” was published in 2010, summarising the recommendations at that time for working with MMEs (Knutti et al., 2010b).

In the meantime, numerous studies have proposed diverse methods for MME studies. However, it is challenging to keep an overview of these studies, and there is still a lack of guidelines on how to combine models within MMEs (Herger et al., 2018). The design of MME studies involves a set of decisions related to model selection, weighting, and uncertainty measures. Each of these decisions requires careful consideration of a broad range of aspects and often entails compromises that differ depending on the research question. This individuality makes it challenging or even impossible to establish universally applicable guidelines for MME studies. However, we believe it is valuable to give an overview of the key aspects to consider, and in some cases, present approaches that the Fresh Eyes on CMIP community has found to be useful. With this, we hope to support researchers who have newly entered the field of climate science, but also to provide an overview of existing resources and approaches for more experienced scientists, particularly for (but not restricted to) the upcoming 7th phase of CMIP.

While the focus of this paper is on the challenges associated with combining various ESMs within an MME, it should be pointed out that there are other types of climate ensembles. Besides such uninitialized simulations, there are initialized climate model ensembles that are routinely used for seasonal prediction (see e.g. Becker et al., 2020, 2022; Buontempo et al., 2022; Kirtman et al., 2014; Min et al., 2025). Initialized climate model ensembles are based on accurate initialization and thus have an emphasis on assimilation procedures to capture the atmosphere, ocean and land conditions. While their goals differ from those of CMIP, initialized prediction ensembles face similar challenges related to ensemble design, model weighting, and evaluation against observations. Further ensemble types include initial condition ensembles (ICEs) and perturbed parameter ensembles (PPEs) (IPCC, AR5). ICEs are generated with a single climate model using varying initial conditions (i.e., a perturbed initial state) to address the uncertainty due to natural or internal variability. If sufficiently many ensemble members are available, they are referred to as Single Model Initial-condition Large Ensembles (SMILEs). PPEs also compare multiple realizations from a single climate model, but in this case, a set of chosen physical parameters that are assumed to affect the quantity of interest (e.g., global mean surface temperature) is systematically varied to quantify the effect on the model outcome (e.g. Eidhammer et al., 2024; Sexton et al., 2021). This enables a systematic exploration of intra-model uncertainty. Finally, the so-called grand ensembles are based on a combination of various ensemble types (IPCC, AR6).

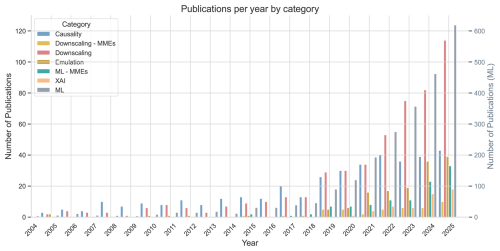

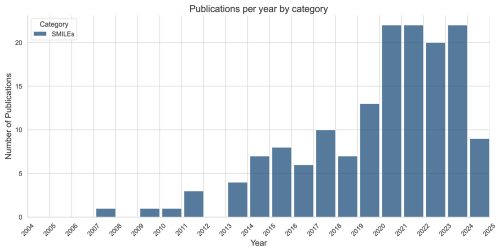

In the following section, we conduct a comprehensive literature review on studies regarding model evaluation (Sect. 2.1), model dependence (Sect. 2.2), model selection and weighting methods (Sect. 2.3) and uncertainty characterization (Sect. 2.4). In this context, we also provide a summary of useful tools for MME analysis (Sect. 2.5). In Sect. 3, we complement these guidelines with a collection of frequently occurring topics and challenges based on the experience of the WCRP Fresh Eyes on CMIP community. In Sect. 4, we discuss emerging trends for working with MMEs such as machine learning (ML), SMILEs and the necessity for more awareness of computational resources associated with MME studies.

Over 84 General Circulation Models (GCMs) from at least 43 international institutes are available through CMIP (https://wcrp-cmip.org/map/, last access: 14 April 2026). When addressing any research question, the need for specific variables, scenarios, resolutions or experiments narrows the pool of available models. However, the remaining number is often still large, prompting the following questions: Should all available models be used, or only a subset? How can the models be identified that are most suitable for such a subset? The two primary objectives when selecting models are to optimize model performance and to reduce duplicated information (Herger et al., 2018). As adequate selection criteria are central to the design of MME studies, we aim to provide guidance for the choice of models in this section.

2.1 Model Evaluation

Model evaluation refers to the systematic assessment of climate model simulations against observational reference data in order to compare model performance and identify biases. For an overview of model bias see Supplement S2. In practice, this involves benchmarking historical simulations with respect to observed climate statistics, such as mean states, variability, spatial patterns, and relevant physical processes.

2.1.1 Observation Datasets for Model Evaluation

Observational reference datasets used for model evaluation include both direct observations and reanalysis products. Reanalysis datasets are physically consistent products produced by assimilating diverse observational data into a numerical weather or climate model. They combine the broad spatial and temporal coverage of models with observational constraints and are therefore widely used as reference datasets. Direct observations include paleoclimate data, ground-based measurements over land and ocean (e.g., ships, buoys and sail drones), aircraft and balloon measurements, and satellite data. Paleoclimate data give insight into the state of the Earth's climate hundreds to millions of years ago, offering valuable constraints for paleoclimate simulations that help us understand recent and future climate change in the context of longer-term climate variability. For the more recent past, most of the reference observations originate from land-based in situ measurements, which are not equally distributed around the globe (e.g., there are more land measurement stations in the Northern Hemisphere than in the Southern Hemisphere). The advent of Earth observation satellites has revolutionized the availability and coverage of global reference datasets. However, satellite datasets are limited to the time after the 1970s, depending on the variable of interest.

All these datasets have distinct advantages and disadvantages: they encompass different spatial and temporal scales, cover different locations and time periods, rely on different measurement techniques, or vary in accuracy. See e.g. Sippel et al. (2024) for challenges in observational data. Associated uncertainties also differ, e.g. due to instrument uncertainty, calibration limitations, or interpolation procedures. These uncertainties in the reference datasets can be accounted for by combining multiple datasets (Notz et al., 2016). Combining datasets also facilitates signal detection for subsequent comparison with model ensemble outputs (Santer et al., 2008). Observational ensembles have been paired with MMEs in studies e.g. with regard to the tropical troposphere (Santer et al., 2008) or to Antarctic sea ice (Roach et al., 2018). Depending on the variable of interest, commonly used reanalysis datasets are ERA5 (produced by ECMWF), MERRA-2 (produced by NASA GSFC), NCEP DOE R-2 (produced by NOAA), and JRA-3Q (produced by the Japan Meteorological Agency).
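One simple way to picture the use of several reference datasets is to treat their spread as a rough envelope of observational uncertainty. The following is a minimal sketch under strong assumptions: the reference products are taken to be already on a common grid, the min–max range across products is used as the envelope (other choices, such as a standard deviation, are equally possible), and all numbers and function names are hypothetical.

```python
import numpy as np

def observational_envelope(references):
    """Return mean, lower and upper bound across reference products.

    references: array of shape (n_products, n_points), all on the same grid.
    """
    refs = np.asarray(references, dtype=float)
    return refs.mean(axis=0), refs.min(axis=0), refs.max(axis=0)

def fraction_outside_envelope(model, references):
    """Fraction of grid points where the model lies outside the range
    spanned by the reference products."""
    _, lower, upper = observational_envelope(references)
    model = np.asarray(model, dtype=float)
    outside = (model < lower) | (model > upper)
    return float(outside.mean())

# Three hypothetical reference products at four grid points.
refs = np.array([
    [1.0, 2.0, 3.0, 4.0],
    [1.2, 2.1, 2.8, 4.3],
    [0.9, 1.8, 3.1, 4.1],
])
# One hypothetical model field on the same grid.
model = np.array([1.1, 2.5, 3.0, 3.5])

frac = fraction_outside_envelope(model, refs)
print(frac)
```

A model value inside the envelope cannot be distinguished from the references given their mutual disagreement, whereas values outside it point to a discrepancy that is larger than the differences among the reference products themselves.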

Moreover, model evaluation using observations is not always straightforward, as observational sensors do not necessarily measure the variables simulated by climate models. To ensure an “apple-to-apple comparison”, observed quantities must be converted into model-output-like variables, or vice versa. For example, software has been developed that simulates what a satellite would observe over the model atmosphere. In addition, it must be ensured that observations and simulations have the same temporal and spatial resolution, including the horizontal grid and number of vertical levels (Simpson et al., 2025), which can be achieved by appropriate regridding methods. See Sect. 3.5 for details on regridding.

Generally, there are two approaches to model evaluation: (i) The performance-oriented approach focuses on identifying the models whose output is closest to observations or reanalysis data. (ii) The process-oriented approach seeks models that best capture the dynamics of interest. Regardless of the approach, it is essential for any research project to report on the performance of all models available before applying any ranking or weighting methods, and the selection criteria should be reported transparently (Knutti et al., 2010a). Such evaluations are sometimes already available in the literature and can be referenced. But in that case it is important to make sure that they cover the variables, scales, and other factors relevant to the specific research questions.

2.1.2 Performance-oriented Evaluation

For shorter timescale forecasts, predictions can be verified within days as observations become available. This is typical of weather forecasting and initialized climate model simulations, in which models are started from observation-constrained initial conditions. Such near-term verifiability offers an opportunity to build confidence in models, particularly for climate services and decision-relevant applications. Although initialized and uninitialized climate projections address different time horizons, linking insights from both may help contextualize uncertainties and enhance trust in long-term projections. Climate projections addressing longer time scales cannot be directly verified in real time, as the relevant time scales (decades to centuries) preclude immediate verification. This is the case for uninitialized climate model simulations, which represent the standard approach for long-term climate projections and are the focus in this review. Accordingly, climate model performances are evaluated with reference to past and present-day climatology (Knutti, 2010).

Performance-oriented model evaluation is based on the assumption that models that fail to perform well for the past regarding some specific climate phenomena will also do so for the future. While this assumption is commonly accepted, it also is a limitation of this approach as the role of specific circulation patterns and their interactions might change throughout the 21st century. In this context, Knutti et al. (2010a) found that model performance evaluated for the past correlates only weakly with the magnitude of the projected change in the future, illustrating that constraining models based on past performance does not necessarily reduce future inter-model spread. Given these pitfalls, Mendlik and Gobiet (2016) propose to only remove the severely unrealistic models. A detailed assessment on how to deal with outliers can be found in Sect. 3.3.

Because uninitialized climate model simulations are free-running and not constrained by observations, performance-oriented evaluation cannot rely on a direct comparison of individual events or temporal trajectories. Instead, model evaluation is necessarily based on climatological characteristics, such as mean states or spatial patterns. Evaluating this climatological performance comes down to the choice of appropriate metrics. Model ranking has been found to be sensitive to this choice (Gleckler et al., 2008). However, for specific variables, the model projections may be largely independent of the choice of underlying metrics and ranking methods (Santer et al., 2009). Given the diversity of possible research questions, there is no single or combined performance metric that can reliably identify the “best” model independent of the research question. While this may sound disappointing since it prevents the standardization of model evaluation, it also has the advantage of reducing the effect of model convergence due to tuning (Knutti, 2010), which allows for a more reliable representation of future uncertainty and decreases the likelihood of making overconfident predictions. Generally, a metric is recommended if it is as simple as possible while at the same time being as statistically robust as possible, meaning that its dependence on the specifications of the metric is low (Knutti et al., 2010b). Therefore, for any study, it is essential to use metrics that are relevant to the specific research question while also matching the spatial and temporal scale of the phenomenon in question.
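As a concrete illustration of a simple climatological metric, the sketch below computes an area-weighted mean bias and RMSE between a model climatology and a reference climatology on a regular latitude-longitude grid. The cosine-of-latitude weighting is a common approximation for such grids; the grid, the synthetic temperature fields, and the function names are assumptions made for this example only.

```python
import numpy as np

def area_weights(lat_deg, n_lon):
    """Normalized cos(latitude) weights for a regular lat-lon grid."""
    w = np.cos(np.deg2rad(lat_deg))
    w2d = np.repeat(w[:, None], n_lon, axis=1)
    return w2d / w2d.sum()

def weighted_bias_rmse(model_clim, obs_clim, lat_deg):
    """Area-weighted mean bias and RMSE of two (lat, lon) climatologies."""
    diff = model_clim - obs_clim
    w = area_weights(lat_deg, diff.shape[1])
    bias = float(np.sum(w * diff))
    rmse = float(np.sqrt(np.sum(w * diff ** 2)))
    return bias, rmse

# Hypothetical 5 x 2.5 degree grid and idealized surface temperature (K).
lat = np.linspace(-87.5, 87.5, 36)
lon = np.linspace(0.0, 357.5, 144)
obs = 288.0 - 30.0 * np.sin(np.deg2rad(lat))[:, None] ** 2 + 0.0 * lon[None, :]

# Case 1: uniform warm bias of 1 K.
uniform = obs + 1.0
bias_u, rmse_u = weighted_bias_rmse(uniform, obs, lat)

# Case 2: +1 K in the Northern and -1 K in the Southern Hemisphere.
dipole = obs + np.sign(lat)[:, None]
bias_d, rmse_d = weighted_bias_rmse(dipole, obs, lat)

print(bias_u, rmse_u, bias_d, rmse_d)
```

The second case shows why the choice of metric matters: the hemispheric errors cancel in the global mean bias but not in the RMSE, so the two metrics would rank this hypothetical model very differently.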

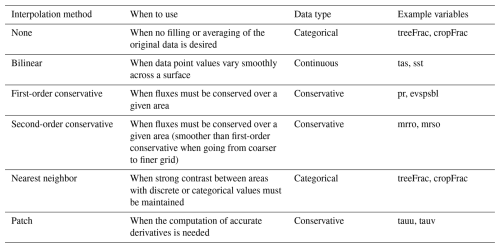

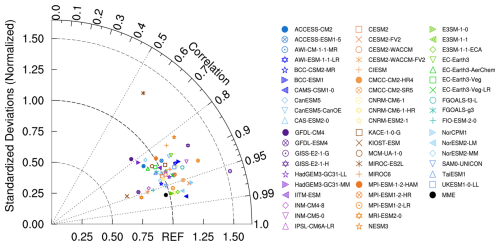

Taylor diagrams (Taylor, 2001) have become a widely used tool to visualize performance-oriented model evaluation, helping to identify better performing models as well as outliers. They are applied across the full range of climate-related topics, including e.g. the Indian Summer Monsoon (Roy et al., 2019) and seasonal mean temperatures (Tang et al., 2016). In a Taylor diagram, the radial distance from the origin represents the model standard deviation, the angle from the horizontal axis encodes the correlation with observations, and the geometric distance to the reference point (defined by the observed standard deviation and a correlation of 1) equals the centered root mean square error, quantifying pattern mismatch after mean removal. Models closer to the observed standard deviation and with higher correlation coefficients (and therefore lower root mean square error) are considered better performing for specific climate features (Taylor, 2001). Diagrams are often normalized, so that the radial distance indicates the ratio of the standard deviation of each model to that of the observational dataset, while the angular position still represents the pattern correlation coefficient between models and observations. For example, the Western Pacific pattern, a prominent teleconnection pattern during the boreal winter over the North Pacific, was analyzed for 56 CMIP6 models using a Taylor diagram (Fig. 1, Aru et al., 2023). It shows that the spatial correlations of the geopotential height anomalies at 500 hPa over the Western North Pacific between individual CMIP6 models and observations exceed 0.6. In reproducing spatial patterns, the mean of the MME outperforms most individual models, which is evidenced by a spatial root mean square deviation of 0.97. This diagram also makes it possible to identify outlier models, such as the MIROC-ES2L in this example. Selecting only the best performing models can improve the final MME mean.

Figure 1. Example of the use of a Taylor diagram showing the geopotential height anomalies at 500 hPa over the Western North Pacific (20–80° N, 120° E–120° W) in individual CMIP6 models, MME and observations, taken from Aru et al. (2023).
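The three quantities displayed in a Taylor diagram are linked by the law of cosines, E'^2 = σ_m^2 + σ_o^2 − 2 σ_m σ_o R (Taylor, 2001), where σ_m and σ_o are the model and observed standard deviations, R is the pattern correlation and E' is the centered root mean square error. The sketch below computes these statistics for a synthetic pair of fields and checks the relation numerically. For simplicity it uses unweighted statistics, whereas spatial applications would normally apply area weights; the data and function name are illustrative assumptions.

```python
import numpy as np

def taylor_statistics(model, obs):
    """Statistics underlying a Taylor diagram (unweighted, for illustration)."""
    model = np.asarray(model, dtype=float).ravel()
    obs = np.asarray(obs, dtype=float).ravel()

    # Remove the means: the diagram only describes the centered pattern.
    m_anom = model - model.mean()
    o_anom = obs - obs.mean()

    sigma_m = float(np.sqrt(np.mean(m_anom ** 2)))
    sigma_o = float(np.sqrt(np.mean(o_anom ** 2)))
    corr = float(np.mean(m_anom * o_anom) / (sigma_m * sigma_o))
    crmse = float(np.sqrt(np.mean((m_anom - o_anom) ** 2)))

    return {
        "sigma_model": sigma_m,
        "sigma_obs": sigma_o,
        "correlation": corr,
        "centered_rmse": crmse,
        # Polar coordinates of the model point in a normalized diagram.
        "radius": sigma_m / sigma_o,
        "angle_rad": float(np.arccos(np.clip(corr, -1.0, 1.0))),
    }

# Synthetic "observed" pattern and a noisy, slightly amplified "model" pattern.
rng = np.random.default_rng(42)
obs = rng.standard_normal(1000)
model = 1.2 * obs + 0.5 * rng.standard_normal(1000) + 3.0  # includes a mean bias

stats = taylor_statistics(model, obs)

# Law-of-cosines check: both sides should agree to rounding error.
lhs = stats["centered_rmse"] ** 2
rhs = (stats["sigma_model"] ** 2 + stats["sigma_obs"] ** 2
       - 2.0 * stats["sigma_model"] * stats["sigma_obs"] * stats["correlation"])
print(stats, lhs, rhs)
```

Note that the constant offset of 3.0 added to the synthetic model field does not affect any of the returned statistics, which reflects the fact that a Taylor diagram carries no information about the mean bias.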

A frequent challenge in climate model evaluation is determining whether models yield correct results for incorrect reasons, due to compensating errors (Eyring et al., 2016; Ivanova et al., 2016). There is a possibility that, while a model appears to accurately represent some variable, the underlying processes are not well-captured, which could mask inherent biases in the model. For example, analysing CMIP6 models, Zhao et al. (2022) reported that the cloud radiative effect reveals compensating errors between the modeled total cloud fraction and the liquid water path. These errors offset each other, resulting in a smaller net error in the cloud radiative effect. Di Luca et al. (2020a) addressed the issue of error compensation in CMIP5 simulations of hot temperature extremes by developing a new error metric called the “additive error.” This metric adds up the absolute errors of four components contributing to temperature extremes: the long-term mean, seasonality, diurnal temperature range, and the local temperature anomaly on the day of the extreme. Compared to traditional bias or absolute error metrics, the additive error more sensitively captures the total error in extreme temperature estimates. Furthermore, Di Luca et al. (2020b) defined a new error estimator that aims to minimise error compensation.
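The idea behind summing absolute component errors can be sketched in a few lines. The example assumes, purely for illustration, that a temperature extreme can be written as the sum of the four components named above, so that the net error equals the sum of the signed component errors; the exact decomposition and any normalization used by Di Luca et al. (2020a) may differ. All numbers and names are hypothetical.

```python
COMPONENTS = ("long_term_mean", "seasonality", "diurnal_range", "local_anomaly")

def net_and_additive_error(model_components, obs_components):
    """Return the net (signed) error and the sum of absolute component errors.

    Both inputs map each component name to its contribution (in K) to the
    temperature extreme. This mirrors the idea of an additive error only in
    spirit and is not the published implementation.
    """
    errors = {name: model_components[name] - obs_components[name]
              for name in COMPONENTS}
    net = sum(errors.values())
    additive = sum(abs(e) for e in errors.values())
    return net, additive

# Hypothetical component contributions (K) for one grid point.
model_parts = {"long_term_mean": 15.0, "seasonality": 10.0,
               "diurnal_range": 6.0, "local_anomaly": 4.0}
obs_parts = {"long_term_mean": 14.0, "seasonality": 12.0,
             "diurnal_range": 5.0, "local_anomaly": 4.0}

net, additive = net_and_additive_error(model_parts, obs_parts)
print(net, additive)
```

In this constructed case the simulated extreme matches the reference exactly (net error of zero), although three of the four components are wrong; the sum of absolute errors exposes the compensation that the net error hides.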

It is important to remember that models are calibrated with the aim to reduce anomalies compared to observational data before becoming available in new CMIP generations. During this calibration (often referred to as tuning), parameters, typically associated with unresolved processes such as clouds, convection, or boundary-layer dynamics, are adjusted to improve agreement with observations. Consequently, improvements in overall model performance in new CMIP generations do not necessarily stem from enhanced capabilities in capturing relevant processes, but may instead result from optimized calibration (Knutti, 2010). A related issue is that the same observational datasets used for model calibration are often also employed for model evaluation, which is not optimal as calibration and evaluation datasets ideally should be independent. This concern is even more pronounced when using reanalysis products as reference data, since climate models are an integral part of their generation.

Additionally, observational data can influence model performance through the forcings themselves. For example, in concentration-driven CO2 simulations, observed atmospheric concentrations are prescribed directly for historical simulations, rather than being computed from emissions, as in emission-driven models. This approach further constrains the model output, since the model does not simulate atmospheric CO2 concentrations from emissions via an interactive carbon cycle. Consequently, apparent improvements visible in the model's evaluation do not necessarily indicate a better representation of the carbon cycle itself.

Ideally, the evaluation process also provides insight into how well basic dynamic processes relevant to the research questions are reproduced in models (Knutti et al., 2010b). For a research question regarding rainfall, for example, this could mean not only analyzing the precipitation pattern, but also inspecting wind patterns to see if the associated circulation is captured well. Process-oriented model evaluation specifically targets the model performance concerning such dynamics.

2.1.3 Process-oriented Evaluation

This evaluation approach shifts from traditional performance-oriented evaluation to more detailed, process-oriented metrics, which are critical for advancing the next generation of ESMs. Eyring et al. (2005) and Gleckler et al. (2008) emphasise the need to evaluate a wide range of climate processes, since accurately simulating one aspect does not ensure accuracy in others. These authors initiated the development of a comprehensive set of model metrics to assess important processes in climate simulations. Process-oriented evaluation identifies sources and limitations of predictability, guiding model development by revealing deficiencies in the representation of physical processes and thereby enhancing the reliability of climate projections (Eyring et al., 2016). By incorporating process-oriented analysis into diagnostic packages (examples in Sect. 2.5), evaluations become reproducible, accelerating model improvements and establishing benchmarks for progress. As with any standardization effort, however, such benchmarks must be applied with care, as they have the potential to promote model similarity. Another relevant resource in the context of process-oriented evaluation is Simpson et al. (2025), who review the ability of climate models to reproduce historically observed forced trends and outline best practices for confronting modeled and observed signals. Within the MME framework, process-based approaches help identify which processes contribute most to inter-model differences and provide insights into the mechanisms behind model performance. Here, we outline some common use cases and techniques.

Process-oriented diagnostics to reduce model bias: One major focus in the development of process-oriented metrics is the investigation of phenomena with strong bias in the models, such as the Madden-Julian Oscillation (MJO), the dominant mode of tropical intraseasonal variability. To better understand the origins of these biases, a number of diagnostics have been developed to facilitate improvements in the representation of the MJO in weather and climate models (Ahn et al., 2020; Li et al., 2022; Wang et al., 2020). The first process-oriented multi-model comparison study on MJO teleconnections found that biases in simulating the position of the Pacific westerly jets, together with deficiencies in MJO representation, contribute substantially to errors in MJO teleconnections (Ahn et al., 2017; Henderson et al., 2017). Similar efforts exist for the El Niño–Southern Oscillation (ENSO), for which Planton et al. (2021) provide a dedicated metrics package.

Improving projections by process-oriented multiple diagnostic ensemble regression: Karpechko et al. (2013) developed the multiple diagnostic ensemble regression (MDER) method that constrains climate projections using observed diagnostics, applying it to Antarctic ozone columns. By identifying key processes that influence ozone, MDER explains a substantial fraction of the inter-model spread in projected ozone across climate chemistry models and outperforms the unweighted multi-model mean in pseudo-realistic validation. Building on this approach, Wenzel et al. (2016) applied the MDER algorithm, implemented as a diagnostic in ESMValTool (see Sect. 2.5), to analyze projections of the austral jet position under the RCP4.5 scenario in CMIP5 simulations. They found that MDER reduces uncertainty in the ensemble-mean projection without substantially altering the long-term mean position of the jet.

Identifying the role of model configurations: Another significant aspect of process-oriented model evaluation is understanding how specific characteristics are influenced by model configurations, such as resolution and parameterization schemes. Kim et al. (2018) proposed a set of diagnostics to assess how model physics affect the representation of tropical cyclones, particularly their intensity in GCMs. The findings suggest that model-specific factors, beyond large-scale environmental parameters, play a key role in shaping tropical cyclones' intensity, with differences in convection schemes contributing significantly to the inter-model spread. Wing et al. (2019) and Moon et al. (2020) further applied these methods, with Moon et al. (2020) showing that tropical cyclone wind structures are strongly influenced by model resolution. Accordingly, Dirkes et al. (2023) emphasize the necessity of applying the developed diagnostics for tropical cyclone analysis in CMIP6 models.

Using idealization or a hierarchy of models: Another approach is to design model configurations that isolate individual processes and components, allowing their relevance for specific phenomena to be tested. For example, Katzenberger et al. (2024) employed an aquaplanet configuration with a circumglobal land stripe to evaluate the meridional circulation, particularly the Hadley cell, in an idealized setup. By shifting the land stripe northwards and southwards, and by modifying the surface albedo or aerosol concentrations, the role of these features in shaping monsoon dynamics could be systematically isolated. More generally, iteratively adding components and increasing the complexity and realism of the setup within a hierarchy of models enables the isolation of individual processes and the assessment of their contributions to the overall model performance. See also, e.g., Zhou and Xie (2018) for more insights into this approach.

Using causal inference: In Section 4.1, we provide insights into how ML techniques can be applied to improve process-based evaluation by identifying causal relationships.

Another example of process-oriented assessment is provided by Fasullo et al. (2020), who present a thorough analysis of CMIP representation of the leading Earth system modes of variability. Additional applications include regime-based evaluation approaches of low-level marine clouds, where distinguishing stratocumulus from shallow cumulus regimes has helped diagnose persistent cloud-cover and radiative biases in CMIP6 and CMIP5 models and inform targeted model improvements (Črnivec et al., 2023; Cesana et al., 2023). Process-based analyses have also demonstrated that the ENSO–Indian Summer Monsoon teleconnection is robustly represented in CMIP5 and CMIP6 models, consistent with a realistic simulation of the coupled Hadley–Walker circulation and associated precipitation responses (Roy and Tedeschi, 2016; Roy et al., 2017; Fasullo et al., 2020). We provide further details and examples of process-oriented analyses in the Supplement S3.

2.2 Model Dependence

ESMs are developed by multiple modelling groups worldwide. Ideally, the models in a MME would be independent, thereby providing an adequate representation of the epistemic uncertainty. Historically, climate projections are derived by calculating simple averages across the MME, based on the assumption that the ensemble mean offers the most accurate representation of the Earth system by synthesizing the collective modelling efforts (Abramowitz et al., 2019; Knutti et al., 2010a). Assuming independence implies that the MME reflects a sufficiently broad range of uncertainties, and the averaging smooths out individual model biases. In practice, however, the development of ESMs is often not independent (Pincus et al., 2008).

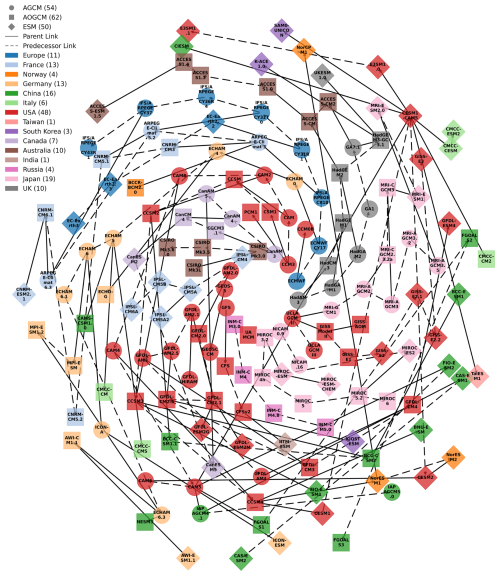

Components that address modelling challenges or have demonstrated strong performance are often shared among multiple ESMs, including e.g. the dynamical core for resolving grid-scale dynamics or components addressing sub-grid-scale phenomena (e.g., parameterization schemes). For example, the McICA radiation scheme (Pincus et al., 2003) provides an efficient and flexible representation of one-dimensional radiative transfer in a cloudy atmosphere, and is thus implemented in multiple ESMs such as several US models (NSF NCAR CESM2, NOAA GFDL-CM4, DOE E3SM-1-0), the Canadian model (CanESM5), the UK model (HadGEM3), and the Norwegian model (NorESM2). Similarly, the NEMO ocean model is widely used across modelling centers, including e.g. HadGEM3 and NorESM2, further underscoring the sharing of model components. Figure 2 illustrates the shared model history tracing back to a few AGCMs (Kuma et al., 2023).

Figure 2. Spiral plot of climate model dependencies, adapted from Kuma et al. (2023). The oldest model in any given family is in the center of the plot, spiralling out as more models are made. Model type is differentiated by shape of marker, and link type is differentiated by arrow type (solid for parent or dashed for predecessor). Models developed in different countries are assigned distinct colors. Markers indicate atmosphere general circulation models (AGCMs), atmosphere-ocean global circulation models (AOGCMs), and Earth system models (ESMs). Numbers of models from each country are indicated in brackets in the legend. ECMWF models are denoted by the country “Europe”.

In addition to dependencies between modelling groups, individual centers often contribute multiple closely related model configurations, for example differing in horizontal resolution (e.g., MPI-ESM1.2-HR for high resolution versus MPI-ESM1.2-LR for low resolution) or in the inclusion of additional components, such as interactive vegetation in EC-Earth3-Veg compared to EC-Earth3. If such dependencies are not accounted for and all models are included with equal weight in a multi-model mean, modelling centers that provide several related configurations effectively receive greater weight than others. This issue is particularly relevant in multi-model studies with a limited number of included models.

Diverse analyses confirm that the number of independent models in CMIP is smaller than the total number of participating models (Jun et al., 2008; Masson and Knutti, 2011; Pennell and Reichler, 2011). Because errors across different models are often correlated (Knutti et al., 2010a), the lack of independence can lead to amplified biases (Jun et al., 2008; Knutti, 2008; Reichler and Kim, 2008; Tebaldi and Knutti, 2007). Moreover, apparent convergence among model results and the associated reduction in ensemble uncertainty may be mistakenly interpreted as strong agreement between models, when in fact they arise from structural dependencies.

The lack of a universally accepted and unambiguous definition of model independence complicates efforts to systematically account for model dependence in MME studies. Some definitions focus on the conceptual idea of whether or not a model adds novel information to the MME (Masson and Knutti, 2011). Others adopt a more analytical approach, proposing a framework for evaluating model dependence and offering examples of its use (e.g., Annan and Hargreaves, 2017). Despite such advances, no broadly accepted solution has yet emerged. Further approaches, such as weighting schemes (Sect. 2.3), have been proposed, but these tend to be problem-specific and struggle to capture the full complexity of model dependencies. The metadata reporting requirements introduced in CMIP6 have made comprehensive assessments of model dependence possible, thereby representing a meaningful advance in transparency. As new model generations are developed and incorporated into CMIP, continued efforts to quantify and correct for model dependence will be essential to ensure robust ensemble projections that reflect true uncertainty.

2.3 Model Selection and Weighting Methods

CMIP MME weighting and selection techniques are used to categorize the CMIP models based on historical model performance (see Sect. 2.1) and independence (see Sect. 2.2) using several metrics (Palmer et al., 2023), which is crucial for optimizing accuracy and reliability in projections (Strobach and Bel, 2020). Several performance-based and statistical approaches are used for MME weighting (Bhowmik and Sankarasubramanian, 2020; Brunner et al., 2020). Performance-based weighting assigns weights based on the ability to reproduce observed historical climate patterns, while statistical model weighting assigns weights based on properties like independence and spread (Brunner et al., 2020). Both approaches are discussed in this section, complemented by subselection approaches. Model weighting and subselecting to account for model outliers is discussed specifically in Sect. 3.3. It is also important to note that some studies may be primarily interested in assessing the overall performance of the full CMIP ensemble. Applying weighting or model subselection is not relevant to such analyses.

2.3.1 Accounting for Model Performance

Most studies in the literature use simple multi-model means, i.e. equally weighted MMEs, to project future climate change impacts (Shuaifeng and Xiaodong, 2022). While such approaches capture the overall trends across all models, equal weighting without any model selection has been criticized for not considering model performance (Shin et al., 2020). Incorporating information on model skill – by emphasizing better-performing models and down-weighting or excluding models with poor simulation capabilities – can improve both the accuracy of projections and the assessment of uncertainty in CMIP MMEs (Merrifield et al., 2020).

Weighting, however, is a challenging process as the basis for weights must be determined and other not yet identified but equally relevant factors may be neglected. Moreover, the relevance of specific model features for a given phenomenon may change under future climate conditions, making it questionable to assign weights solely based on present-day performance, as discussed previously in the context of model evaluation. In addition, weighting schemes may inadvertently favor structurally similar models that produce “mainstream” results, while penalizing outlier models that could provide valuable insights (see also Sect. 3.3). When bias correction is applied, assessing model performance becomes particularly challenging, as differences between models and observations are largely removed, complicating performance-based weighting. Shin et al. (2020) addressed this issue by proposing a hybrid weighting approach that preserves performance information while avoiding unrealistically extreme model weights.

Despite these challenges, several studies have demonstrated the potential benefits of performance-based model weighting for climate projections. Tang et al. (2021) found that weighted MMEs produce more robust projections of extreme precipitation over the Indo-China Peninsula and southern China than unweighted ensembles. Similarly, Shuaifeng and Xiaodong (2022) applied a rank-based weighting approach to CMIP6 MMEs for projecting and quantifying uncertainty in cold surges over northern China. Brunner et al. (2020) discovered a reduction in the projected warming when applying model weighting because some models showing high future warming have systematically lower performance skills.

Another approach to account for model performance is the selection of a subset of models. This can also be considered as a weighting method, which uses the weight 1 for included models, and the weight 0 for excluded models. MMEs with optimized sub-selection can reduce the computational load and have been shown to decrease the ensemble-mean RMSE, e.g. by roughly 10 %–20 % for air temperature and approximately 12 % for precipitation relative to the full multi-model mean (Hamed et al., 2021; Herger et al., 2018; Snyder et al., 2024). The central challenges in subselecting are the identification of performance metrics, as already discussed in Sect. 2.1, as well as the definition of selection criteria that should be made transparent. Herger et al. (2018) compared different sub-selection approaches, including random ensembles, performance-based ranking, and optimal ensemble subselection, and found improved performance over the multi-model mean in some cases. In a random ensemble, multiple models are combined randomly without an explicit optimization strategy. In performance ranking, models are ranked based on metrics such as accuracy, Q-statistics, mean square error etc. In optimal ensemble sub-selection, a subset of models is chosen that maximizes performance. Almazroui et al. (2017) similarly found that a subset of the best-performing models showed better temperature and precipitation projections over the Arabian Peninsula. Numerous further examples exist, e.g. including the ENSO teleconnection (Roy et al., 2018) and lightning over South/South-east Asia (Chandra et al., 2022).
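The view of sub-selection as a 0/1 weighting can be illustrated with a minimal sketch of performance-based ranking. All data here are synthetic, and the model biases, the subset size `k` and the helper `rmse` are hypothetical choices made only for illustration. Note that models are ranked and evaluated against the same reference, so the improvement shown is in-sample; an actual study would need out-of-sample testing.

```python
import numpy as np

rng = np.random.default_rng(3)

# Synthetic "observations" and six hypothetical models (bias + small noise).
reference = np.sin(np.linspace(0, 2 * np.pi, 120))
biases = np.array([-0.1, 0.1, -0.2, 0.2, 1.5, 2.5])
models = reference + biases[:, None] + rng.normal(0, 0.05, (biases.size, reference.size))

def rmse(field, ref):
    """Root mean squared error of a field against a reference."""
    return float(np.sqrt(np.mean((field - ref) ** 2)))

# Rank models by their individual RMSE (best first).
model_rmse = np.array([rmse(m, reference) for m in models])
ranking = np.argsort(model_rmse)

# Sub-selection as weighting: weight 1 for the k best models, 0 otherwise.
k = 4
weights = np.zeros(biases.size)
weights[ranking[:k]] = 1.0

full_mean_rmse = rmse(models.mean(axis=0), reference)
subset_mean_rmse = rmse(np.average(models, axis=0, weights=weights), reference)

print(f"RMSE of full multi-model mean: {full_mean_rmse:.3f}")
print(f"RMSE of top-{k} subset mean:    {subset_mean_rmse:.3f}")
```

In this constructed case, the two strongly biased models dominate the error of the full mean, so excluding them lowers the ensemble-mean RMSE; with real data the outcome depends on the chosen metric and selection criteria.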

2.3.2 Accounting for Model Dependence

As discussed in Sect. 2.2, climate models are not fully independent. A common approach to address this issue is to weight models based on their independence from others. Sanderson et al. (2015) developed a mathematical formulation to quantify model uniqueness and assign corresponding weights. Boé (2018) argues that model interdependencies are more effectively assessed through code similarity instead of through result similarity. Although evaluating source code similarity is indeed challenging (due to issues such as the complexity of model architectures, differing programming languages, licensing issues and proprietary restrictions), it has the potential to reveal shared model components and algorithms that may not be evident from model output comparisons alone. Recent model selection approaches also emphasize model independence (Snyder et al., 2024), with tools such as ClimSIPS explicitly accounting for model dependence (Merrifield et al., 2023). Assessing both source-code similarity and similarity in model results enables the identification of shared methodologies that can lead to correlated predictions, thereby highlighting potential redundancies within MMEs that may bias ensemble statistics. Integrating these measures into weighting schemes can therefore improve the robustness of MMEs and contribute to more reliable and less biased projections.

2.3.3 Combined accounting for Model Performance and Dependence

Knutti et al. (2017) proposed a model weighting method that accounts for model performance as well as model dependency. This method includes two distance metrics, from models to observations, and among models. Here the “effective repetition of a model” within an ensemble, outlined by Sanderson et al. (2015), is accounted for, along with the accuracy of a model with respect to observations.
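A minimal sketch of such a combined weighting is given below. It assumes the Gaussian form in which this scheme is commonly written, $w_i \propto \exp(-D_i^2/\sigma_D^2) \,/\, \bigl(1 + \sum_{j \neq i} \exp(-S_{ij}^2/\sigma_S^2)\bigr)$, where $D_i$ is the distance of model $i$ to observations and $S_{ij}$ the distance between models $i$ and $j$. The function name, the distance values and the shape parameters `sigma_d` and `sigma_s` are illustrative placeholders; in practice the shape parameters have to be calibrated for the application at hand.

```python
import numpy as np

def performance_independence_weights(d_obs, s_model, sigma_d, sigma_s):
    """Combined performance/independence weights (illustrative sketch).

    d_obs   : (n,) distance of each model to observations
    s_model : (n, n) symmetric matrix of inter-model distances
    sigma_d, sigma_s : shape parameters (assumed values, to be calibrated)
    """
    d_obs = np.asarray(d_obs, dtype=float)
    s_model = np.asarray(s_model, dtype=float)

    # Numerator: models closer to observations receive more weight.
    performance = np.exp(-(d_obs / sigma_d) ** 2)

    # Denominator: "effective repetition" of each model within the ensemble.
    similarity = np.exp(-(s_model / sigma_s) ** 2)
    np.fill_diagonal(similarity, 0.0)  # exclude j == i from the sum
    effective_repetition = 1.0 + similarity.sum(axis=1)

    weights = performance / effective_repetition
    return weights / weights.sum()  # normalize to sum to 1

# Hypothetical example: models 0 and 1 are near-duplicates, model 2 is distinct.
# All three have the same distance to observations.
d_obs = [0.5, 0.5, 0.5]
s_model = [[0.0, 0.05, 1.0],
           [0.05, 0.0, 1.0],
           [1.0, 1.0, 0.0]]
weights = performance_independence_weights(d_obs, s_model, sigma_d=0.5, sigma_s=0.3)
print(weights)
```

With equal performance, the two near-duplicate models share roughly the weight of one independent model, which is the intended effect of the effective-repetition term.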

2.4 Uncertainty Characterization

Model selection and weighting ideally reduce uncertainty, which nevertheless remains inevitable when trying to predict climate (Knutti et al., 2019). Characterizing and understanding this uncertainty is essential for guiding model evaluation and development, for science and risk communication, and for assessing climate impacts (Deser et al., 2012a; Deser, 2020; Snyder et al., 2024). When using future projections from CMIP, three types of uncertainty must be dealt with (Hawkins and Sutton, 2009; Lehner et al., 2020; Simpson et al., 2021): scenario or forcing uncertainty, natural variability uncertainty, and model uncertainty. The scenario uncertainty arises because it is not known how human emissions of greenhouse gases and other pollutants from all over the world will develop in the future, and it is accounted for by modelling different emission scenarios (O'Neill et al., 2014; van Vuuren et al., 2025). Natural or internal variability uncertainty is due to the chaotic and, thus, unpredictable evolution of the climate system (Deser et al., 2012b), and has a great impact on climate projections (Lehner and Deser, 2023). The unique realization of our future climate is the response to the combined effect of anthropogenic forcing and internal Earth system variability. Although internal variability uncertainty cannot be reduced, it is quantifiable (Deser, 2020), and using large ensembles of a single model is helpful for this purpose (Tebaldi et al., 2021). Finally, the third type – model uncertainty – results from our imperfect attempts to predict the aforementioned real-world realization. It includes differences among models as well as the varying results that can be obtained within the same model when varying its parameters. While model uncertainty can be reduced, its interpretation and quantification depend strongly on how the ensemble is constructed (Knutti et al., 2019). An adequate understanding of uncertainty has the potential to help MME users with model selection and thereby reduce computational burdens (Snyder et al., 2024).

Decomposing the total uncertainty of climate estimates into contributions from scenario, internal, and model uncertainty provides insights into projections' reliability and potential for reducing uncertainty. This process is called uncertainty partitioning, and it often involves quantifying the consistency among different members of a MME (Hawkins and Sutton, 2009; Lehner et al., 2020; Woldemeskel et al., 2012; Yip et al., 2011). For long-term means of climate data, Hawkins and Sutton (2009) proposed a widely used method for uncertainty partitioning: they fit a polynomial to each model's output in the time dimension to separate the forced response from the internal variability. The variance across the different models' polynomials corresponds to the model uncertainty, and the variance of the residuals, averaged across models, represents the internal variability. Finally, the scenario uncertainty is the variance across multi-model means for different forcings. This method assumes (i) that the forced response can be approximated by the polynomial and (ii) that the arithmetic sum of the different uncertainties comprises the total uncertainty.
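The polynomial-based partitioning can be sketched as follows. This is a simplified illustration on synthetic data, not a reproduction of the original implementation: the array layout `(n_scenarios, n_models, n_years)`, the function name, the unweighted averaging and the synthetic warming rates are all assumptions of this example.

```python
import numpy as np
from numpy.polynomial import polynomial as P

def partition_uncertainty(data, years, order=4):
    """Polynomial-fit uncertainty partitioning (illustrative sketch).

    data : array of shape (n_scenarios, n_models, n_years)
    Returns internal (scalar), model (n_years,), scenario (n_years,), total.
    """
    n_scen, n_mod, _ = data.shape
    t = (years - years.mean()) / years.std()  # rescale time for a stable fit
    fits = np.empty(data.shape, dtype=float)
    for s in range(n_scen):
        for m in range(n_mod):
            coeffs = P.polyfit(t, data[s, m], order)
            fits[s, m] = P.polyval(t, coeffs)  # estimate of the forced response

    residuals = data - fits
    internal = residuals.var(axis=2).mean()    # residual variance, averaged over runs
    model = fits.var(axis=1).mean(axis=0)      # spread across models, per year
    scenario = fits.mean(axis=1).var(axis=0)   # spread across multi-model means
    total = internal + model + scenario        # assumption (ii): additive
    return internal, model, scenario, total

# Synthetic example: 2 scenarios x 3 models, linear trends plus white noise.
rng = np.random.default_rng(0)
years = np.arange(2000, 2100)
scen_slopes = np.array([0.01, 0.03])      # hypothetical warming rates per scenario
model_scale = np.array([0.8, 1.0, 1.2])   # hypothetical model sensitivities
trend = scen_slopes[:, None, None] * model_scale[None, :, None] * (years - 2000)
data = trend + rng.normal(0.0, 0.1, size=trend.shape)

internal, model, scenario, total = partition_uncertainty(data, years)
print(f"internal variance: {internal:.4f}")
print(f"model variance in final year: {model[-1]:.3f}")
print(f"scenario variance in final year: {scenario[-1]:.3f}")
```

In this constructed example the internal component stays constant in time, while the model and scenario components grow as the trends diverge.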

To consider the potential non-additive nature of the total uncertainty (ii), Yip et al. (2011) used analysis of variance (ANOVA) – an approach that partitions the total variance into components due to different sources of variation – to improve the uncertainty partitioning. Woldemeskel et al. (2012) expanded the uncertainty quantification methodology to also include the spatial dimension by introducing the Square Root Error Variance (SREV) method. This method has proven useful for highlighting regional differences in uncertainty. More recently, exploiting the increasing computational capabilities, Lehner et al. (2020) overcame the assumption of the polynomial fit (i) from Hawkins and Sutton (2009), which produced significant regional biases, by using several SMILEs. Instead of calculating the variance of the polynomials as in Hawkins and Sutton (2009), in this approach, the model uncertainty is calculated as the variance across ensemble means from the available SMILEs. This reduces methodological assumptions and thereby improves the results, making SMILEs currently a broadly used tool to partition uncertainty in climate projections. It is important to note that the lack of independence between models (Sect. 2.2) and the methods to account for it (Sect. 2.3) must also be considered in this context.

A question that should be considered, although it can only be partially answered, is whether the MME spread is realistic, too narrow or too broad. The uncertainty may be too broad if observations are not used correctly to tune models, or if the models have extensive and diverse structural errors. The ensemble may be too narrow, and thus overly confident if the models are structurally very similar, if they are overfitted to observations or if uncertain processes are missing. It is also important to recognize that present-day and future uncertainties arise from different sources: present-day uncertainty mainly reflects the models' ability to reproduce observations, whereas future uncertainty stems from variations in how models represent physical processes and feedbacks (Sanderson and Knutti, 2012). Care should be taken when assuming that the spread of present-day or historical simulations will be the same in the future.

As discussed in Sect. 2.1, relying solely on how well a model reproduces past climate to assign confidence can be misleading: models that perform well historically may not accurately project future climate changes, while models that perform poorly may still provide useful information about future conditions (Hall et al., 2019). An evaluation and uncertainty reduction technique that avoids this bias is the development of emergent constraints (Hall et al., 2019). An emergent constraint refers to a statistically robust relationship across a MME between an observable present-day quantity (x) and a projected future change in a quantity (Δy), typically approximated as linear (Simpson et al., 2021). When this relationship is robust, observations of x can be used to constrain the plausible range of Δy, thereby reducing uncertainty. This is commonly achieved by analyzing the probability distribution function of Δy conditioned on the observed value of x. This method has been used for assessing the uncertainty of many processes within different Earth system components (Keenan et al., 2023; Nijsse et al., 2020; Shaw et al., 2024; Simpson et al., 2021; Smith et al., 2022; Thackeray et al., 2022). ML approaches have also shown potential for discovering and exploring emergent constraints (Nowack et al., 2020). Despite the usefulness of emergent constraints, care should also be taken when interpreting the results, since the method assumptions may produce overconfident predictions and may be vulnerable to artifacts within the model (Breul et al., 2023; Sanderson et al., 2021), similar to other uncertainty reduction methods.
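The basic mechanics of a linear emergent constraint can be sketched as follows. The example uses a synthetic ensemble, a hypothetical observation (`x_obs`, `x_obs_std`) and a simple Monte Carlo estimate of the conditional distribution. It deliberately ignores the uncertainty in the regression coefficients themselves, which is one of the ways such an analysis can become overconfident.

```python
import numpy as np

rng = np.random.default_rng(1)

# Synthetic ensemble: present-day observable x and projected change dy per model.
n_models = 30
x = rng.normal(1.0, 0.3, n_models)
dy = 2.0 * x + rng.normal(0.0, 0.2, n_models)

# Inter-model linear relationship between x and dy.
slope, intercept = np.polyfit(x, dy, 1)
resid_std = np.std(dy - (slope * x + intercept), ddof=2)

# Hypothetical observation of x with its uncertainty.
x_obs, x_obs_std = 0.9, 0.05

# Monte Carlo estimate of the distribution of dy conditioned on the observation:
# propagate the observational uncertainty and add the regression scatter.
n_samples = 10_000
x_samples = rng.normal(x_obs, x_obs_std, n_samples)
dy_constrained = slope * x_samples + intercept + rng.normal(0.0, resid_std, n_samples)

unconstrained_std = dy.std(ddof=1)
constrained_std = dy_constrained.std(ddof=1)
print(f"unconstrained spread: {unconstrained_std:.2f}")
print(f"constrained spread:   {constrained_std:.2f}")
```

The constrained spread is narrower only because the synthetic relationship is strong by construction; with a weak or spurious inter-model correlation the constraint provides little or misleading information.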

While climate models exhibit high confidence in thermodynamic aspects of climate change (e.g. global temperature increase) due to robust theoretical and observational evidence, dynamic aspects, particularly related to atmospheric circulation, present significant uncertainties due to their nonlinearity and feedbacks (Shepherd, 2014). Model uncertainties in these two components are uncorrelated (Zappa and Shepherd, 2017), meaning that errors in one component do not influence or predict the errors in the other, so separating them allows better understanding of where the biggest uncertainties lie.

2.5 Available Tools for MME Analysis

The analysis of CMIP datasets is greatly facilitated by a variety of tools developed within the global climate science community. However, the wide range of available tools was not centrally cataloged, making it difficult to obtain a clear overview of available tools and their capabilities. To address this gap, the WCRP CMIP has undertaken an effort to compile a central repository (https://wcrp-cmip.org/tools/, last access: 14 April 2026) that encompasses a broad range of resources.

The repository includes data access platforms (e.g., Earth System Grid Federation, Climate Data Store, IPCC data distribution centre, PANGEO, CAVA, Climate Information Portal), which facilitate accessing large and complex data volumes. It also lists widely used command line operators (e.g., ncview, NCO, CDO) and programming languages suitable for climate data analysis (such as Python, R, Julia), together with useful packages (e.g., multiple Python packages such as matplotlib, scipy, pandas, Iris, xarray, xGCM, xMIP, xclim, xCDAT, UXarray, Metpy, aospy). In addition, the repository contains several comprehensive evaluation and benchmarking tools, such as ESMValTool, bgcval2, RUBISCO, PCMDI Metrics Package, AMBER, and the MDTF Diagnostic Package. These evaluation tools include diagnostics designed to address specific scientific questions. For example, ESMValTool incorporates the Climate Variability Diagnostics Package (CVDP, Eyring et al., 2020; Phillips et al., 2020, 2014; https://github.com/NCAR/CVDP-ncl, last access: 14 April 2026), which facilitates the analysis of modes of climate variability and change in models and observations (Maher et al., 2024). Another important initiative in process-oriented evaluation is led by the Model Diagnostics Task Force (MDTF) under NOAA's Climate Program Office (CPO) Modeling, Analysis, Predictions, and Projections (MAPP) program. It promotes the development and use of process-oriented diagnostics (see Sect. 2.1) in climate and weather prediction models (Maloney et al., 2019; Neelin et al., 2023). Additionally, the WCRP repository includes various data analysis and visualization tools, including the IPCC WGI Interactive Atlas, Panoply, TempestExtremes, CAVA, TECA, KNMI Climate Explorer, and Google Earth Engine. Figure 3 highlights some of these tools, aiming to promote their use across the wider climate community. 
Basic information about each tool is provided by “Tools description cards” on the CMIP website, which include links to tool websites, documentation, tutorials and community support resources. Finally, it should be emphasized that the tools repository is actively maintained and continuously updated. To further enhance its utility for the broader climate science community, new contributions are highly welcome.

Figure 3. Collection of useful tools for using climate data available at https://wcrp-cmip.org/tools/ (last access: 14 April 2026).

While the CMIP tool repository is a key resource for many widely used climate analysis tools, it does not cover all available resources. The wider open-source ecosystem – especially within the Python community – offers many additional tools and libraries for climate data analysis and is supported by a large and active scientific community on platforms such as GitHub.

Building on the general workflow involved in MME studies in Sect. 2, we draw on the experience within the Fresh Eyes community to identify common topics and challenges that arise in this context. All of these aspects are also relevant to the subsections in Sect. 2; however, our aim here is to provide a dedicated overview of specific topics, allowing researchers to access the most relevant information in one place.

3.1 Number of Models

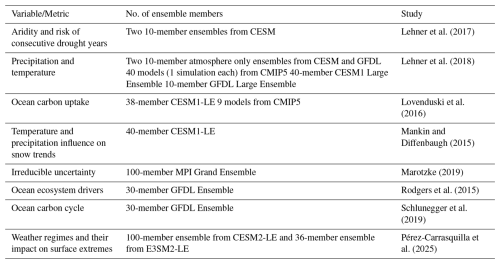

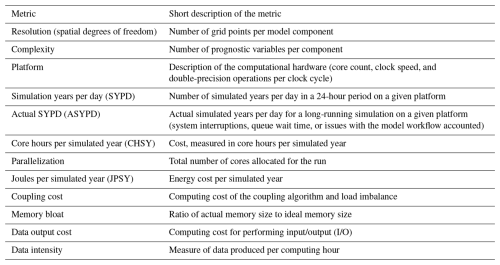

Any MME analysis has to face the question of how many models to include, which is not straightforward, as it involves trade-offs between model diversity, computational cost, and the accuracy of the results. Increasing the number of ensemble members has the potential to enhance the robustness of the results by reducing statistical uncertainty. At the same time, state-of-the-art climate models remain computationally expensive. Downloading and processing these large datasets, particularly in the context of major intercomparison projects like CMIP, is also a resource-intensive challenge that limits the number of models included in MME studies. These challenges raise the question of how many models are actually required to form a “good” ensemble. Here, we focus on the number of models within a MME. A closely related question exists in the context of large ensembles where the number of perturbed simulations is discussed. Some examples for the number of simulations in large ensembles are provided in Sect. 4.2. However, many of the arguments and findings in this section apply to both contexts.

3.1.1 Lower threshold of ensemble size: At least 5 models

If the ensemble size is too small, the inter-model spread may not be fully captured. This has the potential to lead to an underestimation of uncertainties and can consequently result in an overestimation of the models' performance and thus an overconfident interpretation of the results. It is even possible that an ensemble that is too small leads to qualitatively different findings, as shown by Milinski et al. (2020). In this study, the subsets of two and three models showed a warming after a volcanic eruption, while the actual known response would be cooling. So, how many models or simulations should be used as a minimum? Several studies have shown that the error (e.g. root mean squared error when compared to reference data) is reduced substantially up to about five models in different contexts (Herger et al., 2018; Knutti et al., 2010a; Mendlik and Gobiet, 2016; Milinski et al., 2020; Steinman et al., 2015). Adding further models is generally beneficial, but the improvement per additional model is much smaller. Mendlik and Gobiet (2016) find that the subset size can be reduced from 25 to 5 while still being representative of the entire ensemble. As these studies refer to different quantities and research questions, and were conducted independently, but still share five as a lower “threshold”, we propose five models/simulations as an initial baseline minimum for MME studies. Depending on the research question, however, the minimum number of required models might vary. It can be determined by a specific method, as explained below.

3.1.2 Determining individual minimum ensemble size

If feasible, determining the appropriate minimum ensemble size on a case-by-case basis – depending on the specific research question and requirements – is preferable to adopting a general minimum. Milinski et al. (2020) proposed a procedure applicable to diverse research questions. After (1) defining the research question, (2) an error metric (e.g. RMSE) as well as a maximum acceptable error have to be chosen. Then (3), the error for randomly sampled subsets of different sizes has to be quantified. The number of required models can now be identified as the smallest subset size that has an error below the chosen threshold (4). If the identified model number is less than half of the initial sample (e.g. the identified subset includes 40 members, i.e. fewer than 50, when evaluating 100 members), the estimated subset size is considered robust (5). This requirement is introduced to avoid resampling bias, as random subsets close to the full ensemble share many members, are no longer independent, and therefore tend to reproduce the full-ensemble signal by construction rather than providing an unbiased estimate of the required ensemble size; see Milinski et al. (2020) for details. While this procedure provides a straightforward way to identify the minimum number of models in an ensemble, it requires the availability and analysis of a high number of model simulations. Consequently, it might not be feasible for all studies.
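Steps (2) to (5) of this procedure can be sketched as follows. The sketch makes several assumptions that are not prescribed by the text above: the full-ensemble mean serves as reference, the error of a given subset size is summarized by the 95th percentile of the RMSE over random subsets, and the ensemble is synthetic. The function name and all parameter values are hypothetical.

```python
import numpy as np

def required_ensemble_size(ensemble, max_error, n_resamples=200, seed=0):
    """Smallest subset size whose resampled error stays below max_error.

    ensemble : array of shape (n_members, n_time)
    Returns (size or None, robust), where robust is True if the size is
    below half of the available members.
    """
    rng = np.random.default_rng(seed)
    n_members = ensemble.shape[0]
    reference = ensemble.mean(axis=0)  # assumed reference: full-ensemble mean

    for size in range(1, n_members + 1):
        errors = np.empty(n_resamples)
        for k in range(n_resamples):
            # Step (3): random subset of the given size, without replacement.
            idx = rng.choice(n_members, size=size, replace=False)
            subset_mean = ensemble[idx].mean(axis=0)
            errors[k] = np.sqrt(np.mean((subset_mean - reference) ** 2))
        # Step (4): first size whose error is below the chosen threshold.
        if np.percentile(errors, 95) < max_error:
            # Step (5): robustness check against resampling bias.
            return size, size < n_members / 2
    return None, False

# Synthetic 100-member ensemble: common signal plus member-specific noise.
rng = np.random.default_rng(7)
signal = np.linspace(0.0, 1.0, 50)
ensemble = signal + rng.normal(0.0, 1.0, size=(100, 50))

size, robust = required_ensemble_size(ensemble, max_error=0.2)
print(f"required ensemble size: {size}, robust: {robust}")
```

Lowering `max_error` increases the required size; once the result approaches half of the available members, the estimate should no longer be trusted and a larger pool of simulations is needed.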

3.1.3 Remarks for including more models

While the considerations above address the identification of a minimum ensemble size, additional models have the potential to further improve the performance of the MME. For some applications, larger ensemble sizes are even required, e.g. for the quantification of internal variability, as estimating higher-order moments of the distribution demands a sufficiently large ensemble (Milinski et al., 2020). Generally, adding further models improves the statistical robustness of the MME analysis, but the added models should be at least partly independent of the existing models; otherwise, only the weight of single models is increased without any physical reason (Knutti, 2010). See Sect. 2.2 for more details. An ensemble that is too large also has the potential to increase the spread beyond a realistic range, as the inclusion of outliers becomes more probable (Knutti, 2010). Section 3.3 therefore discusses strategies for identifying and handling outliers in more detail. Another consideration becomes relevant when working with different scenarios. As the range of uncertainty increases with the number of models, using the same number of models across all scenarios is essential to ensure comparability (Knutti et al., 2010a).

3.2 Extremes

Extreme weather and climate events have significant impacts on human society and ecosystems. Understanding the drivers and producing reliable future projections of these low-frequency high-impact events is therefore essential for effective climate change adaptation planning. When using MMEs to study extreme climate events, ensembles offer both strengths and challenges.

MMEs based on CMIP or CORDEX are widely used in both regional and global studies of climate extremes (Kim et al., 2020; Soares et al., 2023; Vogel et al., 2020; Yang et al., 2012). These studies typically apply statistical approaches, such as probabilistic modelling, and/or use climate extremes indices defined by the Expert Team on Climate Change Detection and Indices (ETCCDI). Extreme Value Theory (EVT) provides the theoretical foundation for analyzing extreme events by offering statistical methods to model the tails of probability distributions (Coles, 2001; DelSole and Tippett, 2022). One widely used approach within EVT is Generalized Extreme Value (GEV) distribution analysis (Rypkema and Tuljapurkar, 2021), a statistical framework for modelling the tail of the distribution of rare events. For example, GEV analysis is frequently used to estimate return periods of extreme rainfall events, allowing assessment of how the frequency and intensity of such events may change under future climate scenarios (Wehner, 2020). By fitting a GEV distribution to observed and modeled data, researchers can evaluate shifts in extreme event characteristics.
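
As a minimal illustration of such a GEV analysis, the following Python sketch fits a GEV distribution to a series of block maxima with `scipy.stats.genextreme` and derives return levels. The helper name `return_level` and the synthetic "annual maxima" are assumptions for this example; note also that SciPy's shape parameter `c` has the opposite sign to the shape parameter commonly denoted ξ in the EVT literature.

```python
import numpy as np
from scipy.stats import genextreme

def return_level(block_maxima, return_period):
    """Level exceeded on average once every `return_period` blocks.

    Fits a GEV by maximum likelihood (SciPy default) to the block maxima,
    e.g. annual maximum daily precipitation at one grid cell.
    """
    if return_period <= 1:
        raise ValueError("return_period must be larger than 1")
    shape, loc, scale = genextreme.fit(block_maxima)
    # isf(p) returns the value exceeded with probability p per block
    return genextreme.isf(1.0 / return_period, shape, loc=loc, scale=scale)

# Synthetic annual maxima (hypothetical values, not model output)
annual_maxima = genextreme.rvs(c=-0.1, loc=50.0, scale=10.0, size=200,
                               random_state=0)
for period in (2, 20, 100):
    print(period, return_level(annual_maxima, period))
```

Applying the same function to a historical and a future period of a simulation, model by model, would give one simple way to compare return levels across the ensemble before any averaging.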

A major advantage of using the mean of the MME is that averaging across models reduces noise from internal variability and thereby amplifies the climate change signal. This can also help identify trends in extreme events (IPCC, 2021). However, the MME mean might not always be the best choice, particularly when examining the intensity and frequency of extreme events (Knutti et al., 2010b). Using the MME median or mean can sometimes mask the severity of local extremes, as averaging across multiple ensemble members can obscure the range of possible outcomes of individual extreme events. This is especially the case when some models predict significantly different extreme event trends, potentially leading to an underestimation of risks. Uncertainty remains for both hot and cold extremes, with some models deviating considerably from the multi-model mean. Uncertainties are particularly large for precipitation extremes, where, despite a general tendency toward heavier precipitation and longer dry periods, several models project opposing trends in specific regions (Sillmann et al., 2013).

In studies on climate extremes, it is therefore important to evaluate how well each model performs in simulating extremes (Kim et al., 2020; Sillmann et al., 2013) and to correct for biases when appropriate. However, as discussed in Sect. 2.1, model evaluation is conducted using performance-oriented or process-oriented approaches that generally tend to focus on the models' ability to capture mean climate states or large-scale circulation patterns rather than models' extreme event representation. Dedicated evaluations tailored to extremes are therefore required, capturing relevant temporal resolution, region and variables and comparing to an appropriate reference data set. For example, Kim et al. (2020) evaluated the CMIP6 MME against ETCCDI climate indices and identified systematic biases, such as a persistent cold bias in cold extremes over high-latitude regions. When comparing CMIP6 models with CMIP5, they found only limited improvements in simulating temperature and precipitation extremes, highlighting the need for further advancements in the understanding and representation of extreme climate events in ESMs. As a step following the evaluation, employing model weighting is one possible approach to address shortcomings, enhancing the accuracy and reliability of extreme event projections (Balhane et al., 2022).

When studying extreme climate events, uncertainty is another aspect that is important to account for. As discussed in Sect. 3.1, ensemble size strongly influences uncertainty estimates, with larger ensembles allowing a more complete sampling of the range of possible outcomes. In practice, many studies of climate extremes using MMEs rely on a single ensemble member per model to ensure comparability across models (Kim et al., 2020). However, using only one ensemble member per model could miss some of the variability in extreme events that larger ensembles could capture, particularly because the members submitted to intercomparison projects such as CMIP are often not the most extreme ones. Nevertheless, given the constraints on computational resources and the limited availability of large ensembles, this method remains a common compromise.

While increasing ensemble size can help reduce uncertainties, it does not eliminate the limitations inherent to individual models. Downscaling techniques, either statistical or dynamical using RCMs, can provide higher-resolution data to improve the representation of extremes in specific regions. For example, the bias-adjusted high-resolution RCM outputs in the EURO-CORDEX project showed an improvement in the simulation of extreme temperature and precipitation indices across Europe, underscoring the value of RCMs for more reliable and region-specific climate projections (Coppola et al., 2021; Dosio, 2016). MMEs based on RCM projections are particularly valuable for highly vulnerable regions, offering insights into potential changes in local extreme events (Dosio, 2017; Tegegne et al., 2021) and supporting planning for challenges such as water scarcity, food security, and disaster preparedness. For more details regarding downscaling, see also Sect. 3.4.

3.3 Outliers

Outlier models have at times been disregarded in MME analyses because convergence toward the ensemble mean has been interpreted as a measure of model reliability. However, the use of convergence as a measure of model reliability has been criticized because it favors simulations that are closer to the multi-model mean, while underrepresenting uncertainty across a wider range of plausible outcomes (Tebaldi and Knutti, 2007). For example, the original version of the reliability ensemble average (REA) weighting method assigns higher weights to models that better reproduce the current climate, but also penalizes models that diverge from the ensemble mean (Giorgi and Mearns, 2002). As a result, outliers receive lower weights even when their differences may reflect physically plausible behavior rather than poor model performance. This is especially problematic because convergence toward the ensemble mean can partly arise from genealogical similarities among models, rather than independent confirmation of a result (Tebaldi and Knutti, 2007). Despite these concerns, there is a history of privileging convergence towards the MME mean within the climate science community. For example, in the third IPCC assessment report two models were discarded because of extreme warming projections associated with very high climate sensitivity (Tebaldi and Knutti, 2007). More recently, convergence-based ideas continue to influence MME subsetting approaches (Palmer et al., 2023) and are still used, at least in part, in MME evaluation frameworks (Amali et al., 2024). Whether emphasizing convergence is appropriate depends on the purpose of a given study. For applications such as the analysis of climate extremes, averaging across models can mask the full range of possible outcomes. In these cases, outlier models may provide valuable information about plausible high-impact scenarios rather than representing spurious deviations from the ensemble mean. See also Sect. 3.2 for more details on extremes in MMEs. Building on the insights on weighting and building subsets of models in Sect. 2.3, we discuss here in more detail how and when to account for outliers.

3.3.1 Exclusion of Outliers

One approach to account for outliers is exclusion, meaning the removal of models with outlier status from an ensemble. While this can help reduce unrealistic spread and improve agreement with observations, it carries the risk of omitting simulations that represent rare but physically plausible events. In some cases, however, the benefits of exclusion outweigh the drawbacks. For example, Mudryk et al. (2020) identified outlier models for some seasons and regions in their study of snow cover change in the Northern Hemisphere. These models, which overestimated snow cover in areas of low snow mass, were excluded to improve the alignment between observational data and CMIP6 MME projections. Similarly, the Swiss Climate Scenarios CH2018, based on EURO-CORDEX, excluded some outlier GCMs to narrow uncertainty ranges for temperature and precipitation (Sørland et al., 2020). The consequences of outlier inclusion or exclusion have been explored in the literature. Sun and Archibald (2021) compared “aggressive” and “conservative” approaches (respectively including and excluding outliers) and found that, for their study, the differences in results were relatively minor. Similarly, Bracegirdle and Stephenson (2012) presented analyses both with and without outliers to illustrate the sensitivity of polar warming estimates to outlier inclusion and different forms of regression. Overall, while models with outlier projections may be excluded to improve MME alignment with observations or to reduce uncertainty, this should be done with caution. Exclusion is most justified when a model is known to be deeply flawed. Otherwise, removing projections of rare but plausible events may limit the assessment of adaptation strategies and risk management options (Knutti, 2010).

3.3.2 Weighting or Penalization

Outlier models are often retained in an MME through weighting. One commonly used approach is weighting based on root-mean-square error (RMSE) skill scores. For example, Tegegne et al. (2020) preserve MME spread by applying the Katsavounidis–Kuo–Zhang algorithm to select ensemble members based on their contribution to representing the full range of variability within the sampling space for extreme indices of interest, as recommended by the World Meteorological Organization's ETCCDI. The IPCC characterizes this approach as suitable for detecting “moderate extremes”, i.e. events expected to occur up to 10 % of the time (Seneviratne et al., 2012). In this approach, detecting extremes prior to taking the MME mean is useful for weighting members such that the full range of variability within the MME is largely preserved. To identify and characterize more extreme events, methods based on extreme value theory (EVT) are required. EVT focuses on values located in the far tails of probability distribution functions (PDFs) and is therefore better suited to representing rare, high-impact extremes (DelSole and Tippett, 2022).
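
To make the idea of spread-preserving member selection more concrete, the following Python sketch implements a Katsavounidis–Kuo–Zhang-style selection. It is not the implementation used by Tegegne et al. (2020): the function name `kkz_select`, the Euclidean distance, and in particular the choice of the first member (here the one closest to the ensemble centroid, whereas other formulations start from the vector with the largest norm) are assumptions for illustration.

```python
import numpy as np

def kkz_select(features, n_select):
    """Indices of n_select members chosen by a KKZ-style procedure.

    features: array of shape (n_members, n_indices), e.g. standardized
    changes in several extreme indices per model (hypothetical input).
    """
    x = np.asarray(features, dtype=float)
    centroid = x.mean(axis=0)
    # Assumption: start with the member closest to the ensemble centroid.
    selected = [int(np.argmin(np.linalg.norm(x - centroid, axis=1)))]
    while len(selected) < min(n_select, len(x)):
        # Distance from every member to its nearest already-selected member
        dists = np.linalg.norm(x[:, None, :] - x[selected][None, :, :], axis=2)
        nearest = dists.min(axis=1)
        nearest[selected] = -np.inf  # never pick the same member twice
        # Add the member that is farthest from everything selected so far
        selected.append(int(np.argmax(nearest)))
    return selected

# Five hypothetical models described by two standardized indices
example = [[0.0, 0.0], [1.0, 0.0], [-1.0, 0.0], [0.0, 5.0], [0.1, 0.1]]
print(kkz_select(example, 3))
```

Because each new member is the one farthest from those already chosen, members at the edge of the ensemble, including potential outliers, are picked early, which is how this kind of selection tends to preserve the spread of the full ensemble.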

Among the approaches rooted in classical statistics are outlier-insensitive methods, which retain outlier models while limiting their influence on the ensemble result. One example is using the ensemble median instead of the mean as a measure of the MME's center, which reduces sensitivity to outliers (Ge et al., 2021). Rank-based tests of statistical significance provide another option. Because they rely on the rank, or position, of a value within a PDF rather than on the value of a particular data point within a sample, they are largely insensitive to outliers (DelSole and Tippett, 2022). One such rank-based test is recommended by the World Meteorological Organization for hydrological data analysis and is robust to outliers and non-normal data distributions (Rojpratak and Supharatid, 2022).
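
The difference in outlier sensitivity between the ensemble mean and median can be seen in a small numerical example. The warming values below are invented for illustration and do not come from any model.

```python
import numpy as np

# Hypothetical projected warming (K) from nine models; the last is an outlier.
warming = np.array([2.1, 2.4, 2.2, 2.6, 2.3, 2.5, 2.0, 2.4, 6.0])

mean_all = warming.mean()
median_all = np.median(warming)
mean_without = warming[:-1].mean()
median_without = np.median(warming[:-1])

# How far a single outlier moves each measure of the ensemble center
shift_mean = mean_all - mean_without
shift_median = median_all - median_without
print(f"mean shifts by {shift_mean:.2f} K, median by {shift_median:.2f} K")
```

The outlier remains part of the ensemble in both cases; only its leverage on the central estimate differs.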

Weighting methods based on Bayesian statistics have been developed to sample uncertainty across a broad statistical space. Compared with frequentist statistics, which uses a fixed population parameter to describe probability distributions, Bayesian statistics uses a conditional parameter that depends on the shape of the PDF for a given dataset (Clyde et al., 2022). Xu et al. (2019) apply Bayesian model weighting to statistically downscale precipitation data for site-specific analyses. The authors argue for the use of statistical downscaling because it delivers data at finer spatial and temporal resolution at relatively low computational expense. At the same time, they note that dynamic downscaling can underestimate extremes and be overly sensitive to outliers. These limitations are addressed by applying a Bayesian weighted average, which reduces outlier influence while retaining information about uncertainty.